Distributed systems fail in distributed ways. A 500 from order-service might trace back to a connection error three hops away, wrapped and re-thrown by the time it reaches the surface. Three separate log streams, three separate grep sessions, no common thread. The question “what went wrong?” becomes “in which of the four possible places did it go wrong?” — and the answer requires correlating timestamps across services by hand.

Observability solves this at the root. Not by adding more logging, but by linking everything — logs, metrics, traces — with a single identifier that flows through every service that handled the request. When something fails, one ID takes you from the log line to the full distributed call tree to the relevant latency metrics, in seconds.

This article builds that stack for a hybrid Java / Python / LangChain microservices setup on Minikube, using OpenTelemetry as the instrumentation layer and the Grafana LGTM stack as the backend. A reference project with three deployable services and a complete Makefile is linked in the quick reference below — make all starts the entire stack from scratch.

The three pillars and the thread between them

Observability is commonly described as having three pillars. They matter independently, but they become something qualitatively different when they are connected.

Logs are the raw record of what happened inside a service — every decision, every call, every error. The problem with traditional logging is that each service writes to its own stream. When a request touches five services, finding the relevant log lines means knowing exactly when the request happened and running five separate searches by timestamp — slow, error-prone, and entirely manual.

Metrics are aggregations over time: request rate, error rate, P95 latency. They tell you something is wrong and roughly where. They do not tell you what the specific failing request looked like, or what triggered the anomaly.

Traces are the timeline of a single request as it flows through the system. A distributed trace records every service call, every downstream request, every database query that a given user interaction triggered — with timing, parent-child span relationships, and service-level attributes attached. This is where the correlation ID lives.

The correlation ID is the key that connects all three. In this stack, OpenTelemetry generates a globally unique 128-bit trace_id for every incoming HTTP request. That ID is:

- propagated automatically to every downstream HTTP call via the W3C traceparent header
- injected into the logging context of every log line written during that request
- attached to every span in the distributed trace stored in Tempo

A single query — {namespace="apps"} | json | traceId="4bf92f35..." in Loki — returns every log line, from every service, for that specific request. A single click on the traceId field opens the full Tempo trace. The investigation that used to take twenty minutes takes thirty seconds.
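As a minimal sketch of how that query could be assembled by a support tool, the helper below validates a trace ID and builds the LogQL string. The function name logql_for_trace and its default namespace are illustrative assumptions, not part of the reference project.

```python
import re

# A W3C trace ID is 16 bytes rendered as 32 lowercase hex characters.
_TRACE_ID_RE = re.compile(r"[0-9a-f]{32}")


def logql_for_trace(trace_id: str, namespace: str = "apps") -> str:
    """Build the LogQL query returning every log line for one trace (hypothetical helper)."""
    trace_id = trace_id.strip().lower()
    # An all-zero trace ID is defined as invalid by the W3C Trace Context format.
    if not _TRACE_ID_RE.fullmatch(trace_id) or trace_id == "0" * 32:
        raise ValueError(f"not a valid trace ID: {trace_id!r}")
    return f'{{namespace="{namespace}"}} | json | traceId="{trace_id}"'


query = logql_for_trace("4bf92f3577b34da6a3ce929d0e0e4736")
print(query)
```

Validating before building the query avoids pasting a truncated ID into Loki and getting an empty result that looks like missing logs.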

Stack choice: LGTM + OpenTelemetry

The Elastic Stack (Elasticsearch, Logstash, Kibana + Elastic APM) was the reference architecture for this kind of setup for most of the 2010s and is still a valid option. But it carries two costs that compound on Kubernetes.

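First, Elasticsearch moved from Apache 2.0 to a dual SSPL / Elastic License model in 2021, neither of which is open source in the OSI sense (an AGPLv3 option was added in 2024). Self-hosting on cloud infrastructure requires navigating that constraint; managed offerings are commercial. In the stack used here, OpenTelemetry and Prometheus are Apache 2.0, while Grafana, Loki, and Tempo are AGPLv3; all of these are OSI-approved licences.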

Second, Elasticsearch indexes the full text of every log line by default, which makes it excellent for unstructured search but resource-intensive as a persistent workload on Kubernetes. Loki takes a fundamentally different approach: it indexes only metadata labels, not log content, and delegates full-text filtering to query time. For structured JSON logs where you filter by traceId or level — which is exactly what this stack produces — the tradeoff is irrelevant and the resource footprint is an order of magnitude smaller.
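The difference is easier to see in a toy model. The sketch below is not Loki's implementation; it is a small in-memory store (the class name TinyLabelStore and the sample log lines are invented for illustration) in which only labels select streams, and JSON fields such as traceId are matched at query time.

```python
import json
from collections import defaultdict


class TinyLabelStore:
    """Toy label-indexed log store: labels identify streams, line content is never indexed."""

    def __init__(self):
        # frozenset of (label, value) pairs -> raw log lines of that stream
        self._streams = defaultdict(list)

    def push(self, labels: dict, line: str) -> None:
        self._streams[frozenset(labels.items())].append(line)

    def query(self, selector: dict, **json_filters) -> list:
        """Pick streams by label, then filter their lines by JSON field at query time."""
        wanted = set(selector.items())
        hits = []
        for stream_labels, lines in self._streams.items():
            if not wanted <= stream_labels:
                continue  # stream rejected on labels alone; its lines are never parsed
            for line in lines:
                record = json.loads(line)
                if all(record.get(key) == value for key, value in json_filters.items()):
                    hits.append(line)
        return hits


store = TinyLabelStore()
store.push({"namespace": "apps", "app": "order-service"},
           '{"level":"info","traceId":"abc","message":"order received"}')
store.push({"namespace": "apps", "app": "inventory-service"},
           '{"level":"error","traceId":"abc","message":"out of stock"}')
store.push({"namespace": "observability", "app": "loki"},
           '{"level":"info","message":"ready"}')

same_trace = store.query({"namespace": "apps"}, traceId="abc")
errors = store.query({"namespace": "apps"}, level="error")
print(len(same_trace), len(errors))
```

The write path does almost no work per line, which is where the smaller footprint comes from; the cost moves to queries, which stay cheap as long as the label selector narrows the streams first.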

The four components of this stack:

- OpenTelemetry Collector: receives OTLP spans from all services, batches and forwards them to Tempo. Deployed as a Kubernetes Deployment in the observability namespace.
- Grafana Tempo: stores and queries distributed traces. Accepts OTLP gRPC on port 4317.
- Grafana Loki + Promtail: Promtail runs as a DaemonSet, scrapes Kubernetes pod logs, and extracts the traceId field as a Loki label on ingest, enabling direct correlation with Tempo.
- Prometheus + Grafana: Prometheus scrapes /actuator/prometheus (Spring Boot) and /metrics (FastAPI) via pod annotations. Grafana is the unified UI for all three backends.

Architecture

┄ ┄ ┄ all three services share the same trace_id via W3C traceparent ┄ ┄ ┄

Traces    every service ──OTLP──► otel-collector ──OTLP gRPC──► Tempo
Metrics   Prometheus ◄── scrape ─ /actuator/prometheus · /metrics
Logs      Promtail ────────────── K8s pod stdout (JSON) ─────────► Loki
          traceId extracted as label on ingest
                              │
                              ▼
          Grafana · logs ↔ traces ↔ metrics

The OTEL Collector is the only component that the application services need to reach. It runs in the observability namespace and is exposed as a ClusterIP service. Prometheus and Promtail pull from the services directly — no sidecar agent required.

One detail worth noting: Spring Boot’s OTLP exporter uses the HTTP/protobuf transport on port 4318, while the Python services use the gRPC transport on port 4317. Both are received by the same OTEL Collector — it listens on both protocols simultaneously.

The reference project

The cluster setup follows the pattern from the Minikube profiles article: each project gets its own named Minikube profile, its own kubectl context, and an exportable standalone kubeconfig. The make cluster-start target creates the observability-demo profile and automatically exports its context to ~/.kube/observability-demo.yaml.

# 1. Full bootstrap — cluster + infra + build + deploy

make all

# 2. Forward all UIs and service ports to localhost

make port-forward # Grafana :3000 Prometheus :9090 services :8080–8082

# 3. Generate traffic to produce traces, logs, and metrics

make seed # 10 orders through the full chain

# 4. Open k9s on this cluster (two equivalent forms)

make k9s

# k9s --context observability-demo

# k9s --kubeconfig ~/.kube/observability-demo.yaml

# 5. Per-session isolation — all kubectl commands in this terminal hit this cluster

export KUBECONFIG=~/.kube/observability-demo.yaml

Every kubectl and helm command in the Makefile uses --context=observability-demo and --kube-context=observability-demo respectively, so the Makefile is safe to run regardless of which context is currently active in ~/.kube/config.

Instrumentation: Spring Boot

Spring Boot 3.x and Micrometer Tracing handle most of the instrumentation automatically. On top of spring-boot-starter-actuator, three dependencies are all that is needed:

<!-- Bridges Micrometer's tracing API to the OpenTelemetry SDK -->
<dependency>
    <groupId>io.micrometer</groupId>
    <artifactId>micrometer-tracing-bridge-otel</artifactId>
</dependency>

<!-- Exports spans via OTLP HTTP/protobuf to the OTEL Collector -->
<dependency>
    <groupId>io.opentelemetry</groupId>
    <artifactId>opentelemetry-exporter-otlp</artifactId>
</dependency>

<!-- Exposes /actuator/prometheus for Prometheus scraping -->
<dependency>
    <groupId>io.micrometer</groupId>
    <artifactId>micrometer-registry-prometheus</artifactId>
</dependency>

With these on the classpath, application.yml points the exporter at the OTEL Collector and enables 100% sampling:

spring:
  application:
    name: order-service
  jackson:
    property-naming-strategy: SNAKE_CASE

management:
  tracing:
    sampling:
      probability: 1.0
  otlp:
    tracing:
      endpoint: http://${OTEL_COLLECTOR_HOST:otel-collector.observability.svc.cluster.local}:4318/v1/traces
  endpoints:
    web:
      exposure:
        include: health,prometheus

The port matters: 4318 is the OTLP HTTP/protobuf port. Spring Boot’s OTLP exporter uses HTTP transport, not gRPC. Port 4317 is gRPC — pointing to it produces silent connection failures with no obvious error message.
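The sampling probability deserves a word as well. As a rough sketch of the idea behind ratio-based sampling (in the spirit of the trace-ID-ratio samplers found in OpenTelemetry SDKs; the exact arithmetic differs between SDKs, and this is not the Spring Boot code path), the decision can be derived from the trace ID itself, so every service that applies the same ratio reaches the same verdict for the same trace. The function name should_sample is hypothetical.

```python
LOW_64_BITS = (1 << 64) - 1  # mask selecting the lower 64 bits of a 128-bit trace ID


def should_sample(trace_id: int, probability: float) -> bool:
    """Deterministic keep/drop decision for a trace at the given sampling probability."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0.0 and 1.0")
    # Scale the probability onto the 64-bit range; IDs below the bound are kept.
    bound = round(probability * (1 << 64))
    return (trace_id & LOW_64_BITS) < bound


trace_id = 0x4BF92F3577B34DA6A3CE929D0E0E4736
keep_all = should_sample(trace_id, 1.0)   # probability 1.0 keeps every trace
keep_none = should_sample(trace_id, 0.0)  # probability 0.0 drops every trace
print(keep_all, keep_none)
```

A probability of 1.0 is reasonable for a local demo; in production a lower value trades completeness for storage and network cost.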

For structured JSON logs with the trace ID, logstash-logback-encoder reads the traceId and spanId keys that Micrometer Tracing writes to MDC automatically:

<appender name="CONSOLE" class="ch.qos.logback.core.ConsoleAppender">
    <encoder class="net.logstash.logback.encoder.LogstashEncoder">
        <customFields>{"service":"${appName}"}</customFields>
        <includeMdcKeyName>traceId</includeMdcKeyName>
        <includeMdcKeyName>spanId</includeMdcKeyName>
    </encoder>
</appender>

Every log line produced during a request now includes "traceId":"4bf92f35..." as a JSON field. Promtail extracts this field as a Loki label on ingest — no extra configuration on the application side.

The distributed trace propagation is also automatic. When order-service calls inventory-service via Spring’s RestClient, the W3C traceparent header is injected by the auto-configured observation infrastructure. The downstream service receives the same trace_id and continues the trace as a child span. No manual header passing.

The error handler for this service follows the pattern from the exception handling note: a @RestControllerAdvice that logs the full stack trace internally and returns a structured {"code", "message"} response — never the raw stack in the body.

Instrumentation: FastAPI

Five packages cover tracing, metrics, and structured logging in Python:

opentelemetry-sdk>=1.25.0

opentelemetry-instrumentation-fastapi>=0.46b0

opentelemetry-exporter-otlp-proto-grpc>=1.25.0

prometheus-fastapi-instrumentator>=6.1.0

structlog>=24.2.0

At startup, the tracer provider is initialized and the FastAPI app is instrumented:

from fastapi import FastAPI
from opentelemetry import trace
from opentelemetry.sdk.resources import Resource
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
from opentelemetry.exporter.otlp.proto.grpc.trace_exporter import OTLPSpanExporter
from opentelemetry.instrumentation.fastapi import FastAPIInstrumentor

# SERVICE_NAME and OTEL_COLLECTOR_HOST are defined elsewhere in the service (not shown here).
resource = Resource.create({"service.name": SERVICE_NAME})
tracer_provider = TracerProvider(resource=resource)
tracer_provider.add_span_processor(
    # Batches finished spans and exports them in the background
    BatchSpanProcessor(
        # insecure=True: plaintext gRPC to the in-cluster collector, no TLS
        OTLPSpanExporter(endpoint=f"{OTEL_COLLECTOR_HOST}:4317", insecure=True)
    )
)
trace.set_tracer_provider(tracer_provider)

app = FastAPI()
FastAPIInstrumentor().instrument_app(app)

Unlike Spring Boot, the Python exporter uses gRPC on port 4317 (not HTTP on 4318). The OTEL Collector accepts both; the application does not need to know which other services use which transport.

For structured logging with the correlation ID, structlog needs one custom processor that reads from the active OTEL span context at log time:

import structlog
from opentelemetry import trace

def add_otel_context(logger, method, event_dict):
    span_context = trace.get_current_span().get_span_context()
    if span_context.is_valid:
        event_dict["traceId"] = format(span_context.trace_id, "032x")
        event_dict["spanId"] = format(span_context.span_id, "016x")
    return event_dict

structlog.configure(
    processors=[
        structlog.stdlib.add_log_level,
        structlog.processors.TimeStamper(fmt="iso"),
        add_otel_context,
        structlog.processors.JSONRenderer(),
    ],
    ...
)

The field name traceId must match exactly what the Promtail pipeline stage extracts — and what Spring Boot’s logstash-logback-encoder writes. Consistent camelCase across all services ensures Loki uses the same label name everywhere and the correlation link in Grafana works regardless of which service produced the log.
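To make the naming requirement concrete, here is a small stdlib-only sketch of what field-based correlation amounts to: parse each JSON log line, read one agreed field, and group by its value. It is not the Promtail pipeline itself; the function name group_by_trace, the sample lines, and the "legacy-service" entry with a snake_case field are invented for illustration.

```python
import json
from collections import defaultdict

TRACE_ID = "4bf92f3577b34da6a3ce929d0e0e4736"


def group_by_trace(raw_lines, field="traceId"):
    """Group JSON log records by trace ID; lines that lack the exact field are left out."""
    grouped = defaultdict(list)
    for raw in raw_lines:
        try:
            record = json.loads(raw)
        except json.JSONDecodeError:
            continue  # not structured JSON, cannot be correlated
        if isinstance(record, dict) and record.get(field):
            grouped[record[field]].append(record)
    return dict(grouped)


lines = [
    '{"service":"order-service","traceId":"%s","message":"order received"}' % TRACE_ID,
    '{"service":"inventory-service","traceId":"%s","message":"stock reserved"}' % TRACE_ID,
    # Hypothetical service that logs the same ID under a different key
    '{"service":"legacy-service","trace_id":"%s","message":"audit written"}' % TRACE_ID,
]

grouped = group_by_trace(lines)
services = [record["service"] for record in grouped[TRACE_ID]]
print(services)
```

The third line belongs to the same request but silently drops out of the result, which is exactly the failure mode a mismatched field name produces in Grafana.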

For custom spans within a handler — for example, to measure a specific code path — the tracer is used directly:

tracer = trace.get_tracer(SERVICE_NAME)

@app.get("/inventory/{product_id}")
async def get_inventory(product_id: str):
    with tracer.start_as_current_span("inventory.lookup") as span:
        span.set_attribute("product.id", product_id)
        # ... logic

The child span appears nested under the parent HTTP request span in Tempo, with its own duration and attributes.

Instrumentation: LangChain and agentic flows

LangChain operations — chain invocations, LLM calls, tool executions, agent steps — do not appear in OpenTelemetry traces by default. OpenInference from Arize AI fills this gap with OTEL-compatible instrumentation for the LangChain execution graph:

openinference-instrumentation-langchain>=0.1.19

One call after setting the global tracer provider instruments all LangChain operations:

from openinference.instrumentation.langchain import LangChainInstrumentor

trace.set_tracer_provider(tracer_provider) # must come first

LangChainInstrumentor().instrument()

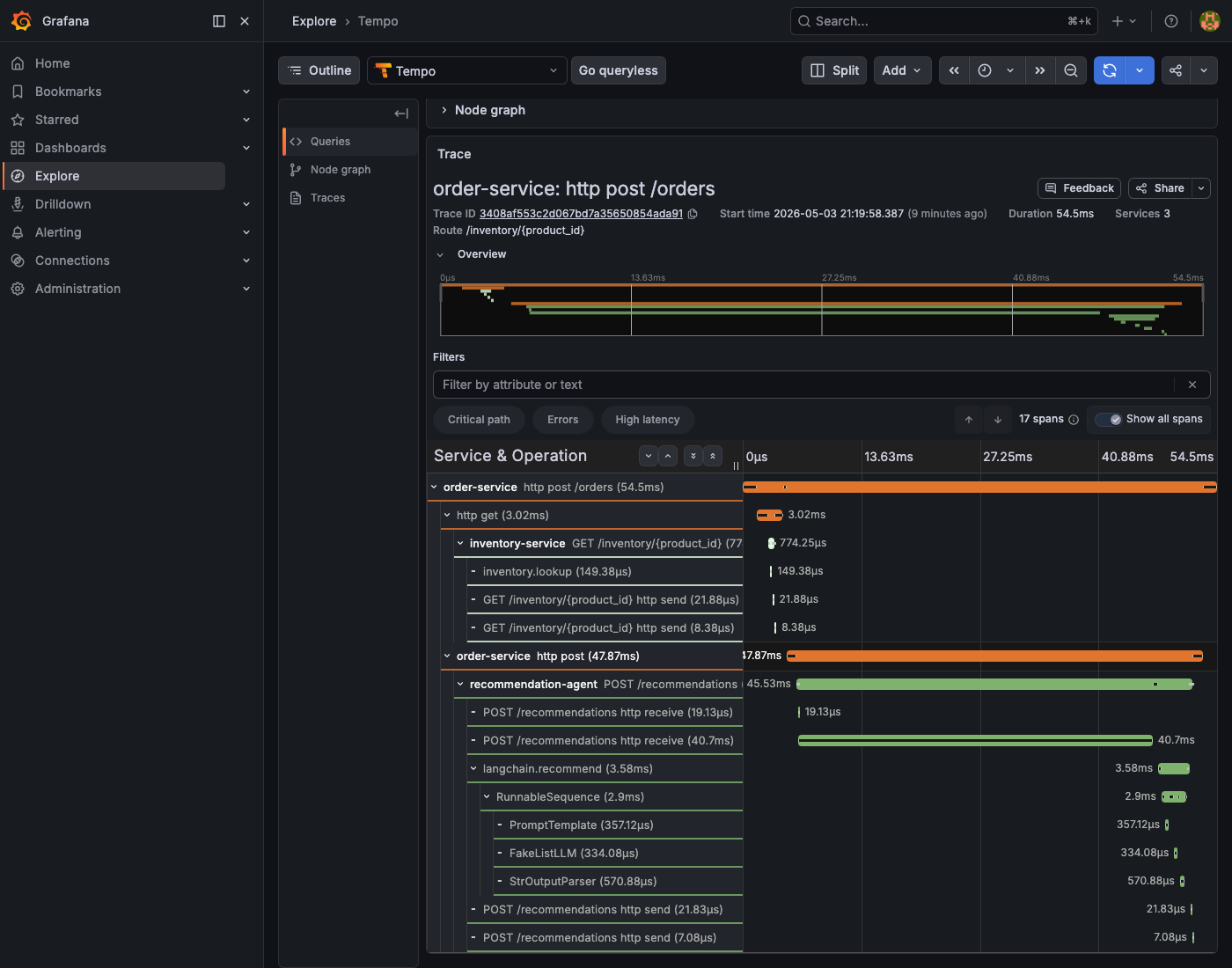

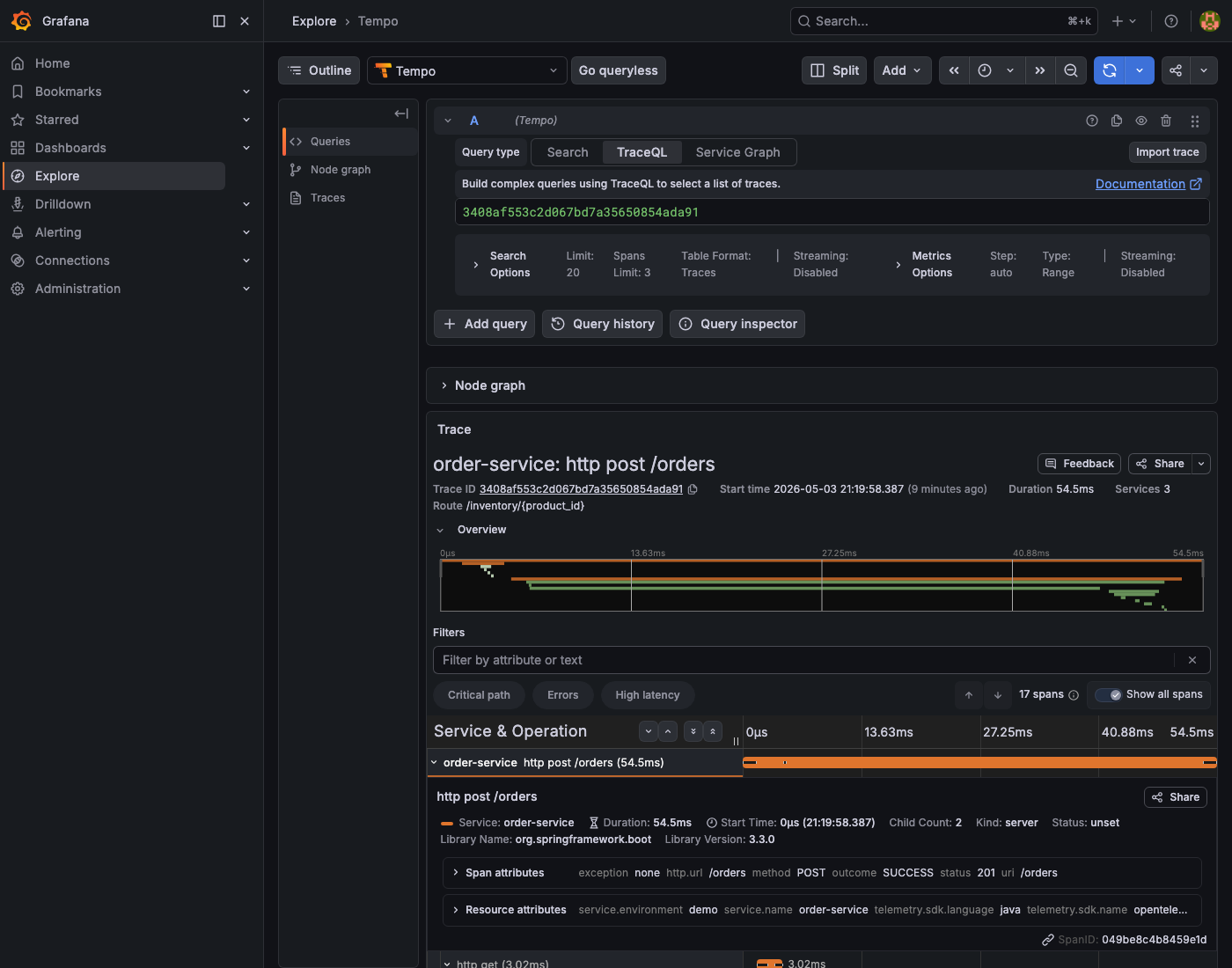

No other changes are needed. Every chain invocation now produces child spans under the parent request span automatically. In Tempo, a trace for a /recommendations call looks like this:

HTTP POST /recommendations (345ms)
└─ langchain.recommend (340ms)          ← custom span from the handler
   └─ langchain.chain (335ms)           ← OpenInference
      ├─ langchain.llm (310ms)          ← LLM call: prompt + completion + tokens
      └─ langchain.output_parser (1ms)

With a real LLM (OpenAI, Anthropic), the langchain.llm span includes the full prompt, the completion text, and token counts — all visible in Tempo without adding instrumentation code. The FakeListLLM in the reference project produces the same span structure, so the wiring is identical regardless of which LLM is plugged in.
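The nesting shown in that trace view falls out of one piece of data: each span records the ID of its parent. The sketch below rebuilds the tree from a flat span list using only the standard library. The span names and durations are the ones from the example trace; the short span IDs, the Span dataclass, and render_tree are made up for illustration and are not Tempo's data model.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    span_id: str
    parent_id: Optional[str]  # None marks the root span of the trace
    name: str
    duration_ms: int


def render_tree(spans):
    """Return indented text lines, one per span, following parent-child links."""
    children = defaultdict(list)
    for span in spans:
        children[span.parent_id].append(span)

    lines = []

    def walk(span, depth):
        # Simplified drawing: every child gets the same connector
        prefix = "" if depth == 0 else "  " * (depth - 1) + "└─ "
        lines.append(f"{prefix}{span.name} ({span.duration_ms}ms)")
        for child in children[span.span_id]:
            walk(child, depth + 1)

    for root in children[None]:
        walk(root, 0)
    return lines


spans = [
    Span("a", None, "HTTP POST /recommendations", 345),
    Span("b", "a", "langchain.recommend", 340),
    Span("c", "b", "langchain.chain", 335),
    Span("d", "c", "langchain.llm", 310),
    Span("e", "c", "langchain.output_parser", 1),
]
tree = render_tree(spans)
print("\n".join(tree))
```

Because spans arrive at the backend as a flat stream from several services, this parent-ID link is the only thing that lets the backend assemble them into one call tree.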

The agent_trace_id field in the /recommendations response returns the current trace ID as a string, so clients can include it in bug reports or pass it directly to a support dashboard:

def _current_trace_id() -> str:
    span_context = trace.get_current_span().get_span_context()
    return format(span_context.trace_id, "032x") if span_context.is_valid else "0" * 32

How the correlation ID propagates

Every HTTP request entering the system, whether through Spring Boot’s auto-instrumentation (order-service) or the FastAPIInstrumentor (the Python services), gets a trace_id generated at the edge. When order-service calls inventory-service, the outgoing HTTP request carries this header:

traceparent: 00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01
             │  │                                │                └─ flags
             │  │                                └─ parent span_id (64-bit)
             │  └─ trace_id (128-bit hex)
             └─ version

The receiving service — whether Spring Boot or FastAPI — reads this header automatically, extracts the trace_id, and continues the trace by creating a new child span under the same ID. The LangChain spans created by OpenInference are children of the FastAPI span. One trace_id runs from the first HTTP entry point through every service and every LLM call.
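What the instrumentation does with the header can be sketched in a few lines of standard-library Python. This is an illustration of the version 00 layout only, not the OpenTelemetry propagator; the names TraceParent, parse_traceparent, and child_traceparent are hypothetical.

```python
import re
import secrets
from typing import NamedTuple

# version(2 hex) - trace_id(32 hex) - parent span_id(16 hex) - flags(2 hex)
_TRACEPARENT_RE = re.compile(r"([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})")


class TraceParent(NamedTuple):
    version: str
    trace_id: str
    parent_span_id: str
    flags: str

    @property
    def sampled(self) -> bool:
        # Lowest flag bit signals that the caller sampled this trace
        return bool(int(self.flags, 16) & 0x01)


def parse_traceparent(header: str) -> TraceParent:
    """Split a traceparent header into its four fields, rejecting malformed values."""
    match = _TRACEPARENT_RE.fullmatch(header.strip())
    if match is None:
        raise ValueError(f"malformed traceparent: {header!r}")
    parsed = TraceParent(*match.groups())
    # Version ff and all-zero IDs are invalid in the W3C format
    if parsed.version == "ff" or parsed.trace_id == "0" * 32 or parsed.parent_span_id == "0" * 16:
        raise ValueError(f"invalid traceparent: {header!r}")
    return parsed


def child_traceparent(incoming: TraceParent) -> str:
    """Header for the next hop: same trace_id, fresh span_id for the calling span."""
    new_span_id = secrets.token_hex(8)  # 8 random bytes = 16 hex characters
    return f"{incoming.version}-{incoming.trace_id}-{new_span_id}-{incoming.flags}"


incoming = parse_traceparent("00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01")
outgoing = child_traceparent(incoming)
print(incoming.trace_id, incoming.sampled)
```

Only the span_id changes from hop to hop; the trace_id and the sampling flag travel unchanged, which is what keeps the whole chain in one trace.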

Structlog reads the trace_id from the active OTEL span context at the moment each log call is made. This means every logger.info(...) inside a request handler automatically includes it — no need to pass the ID through function arguments or thread-local state. Spring Boot’s MDC integration works the same way.

Navigating Grafana

Open http://localhost:3000 (admin / admin) after running make port-forward. Run make seed first to generate traces and logs to explore.

Following a request end to end:

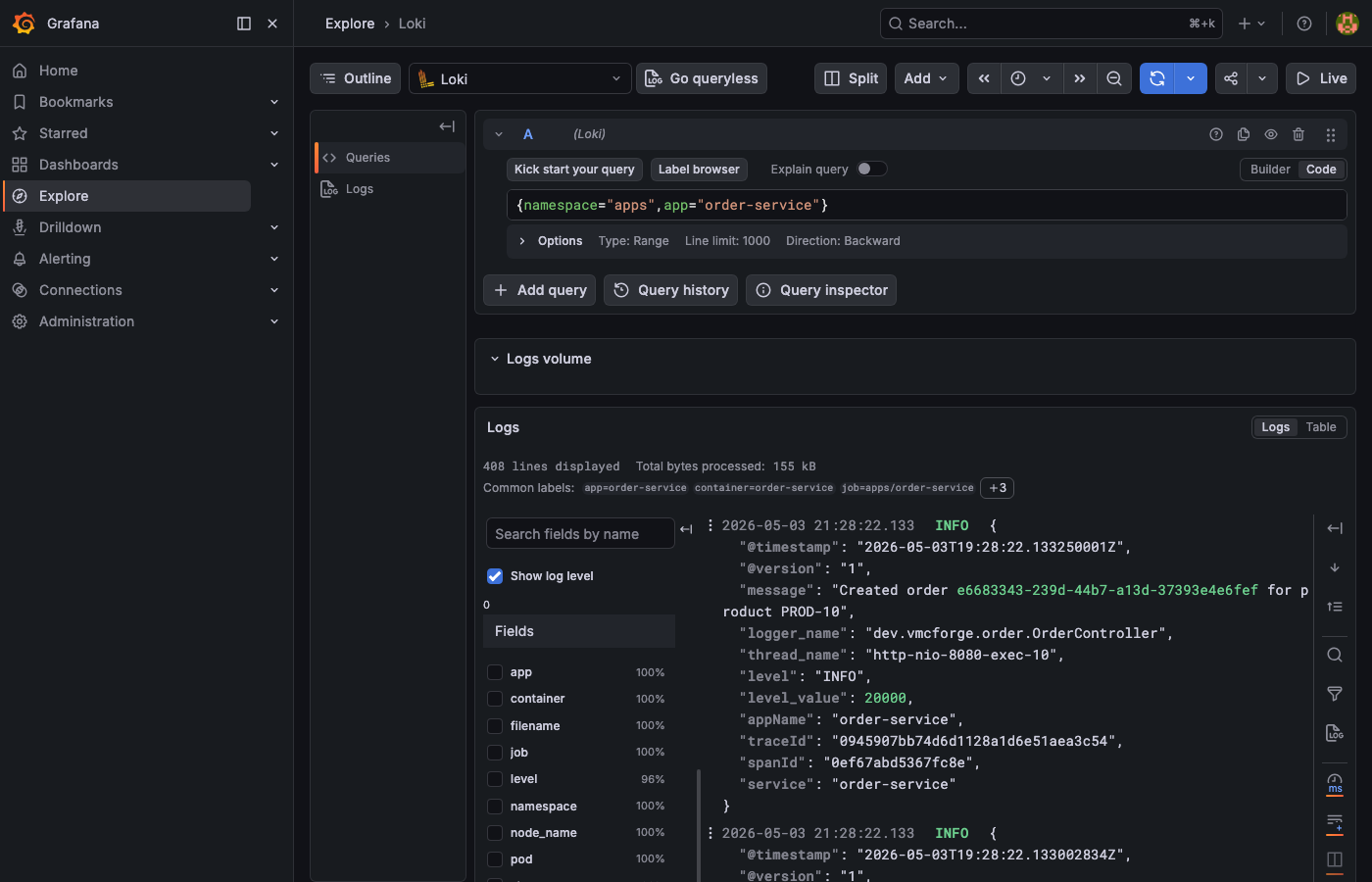

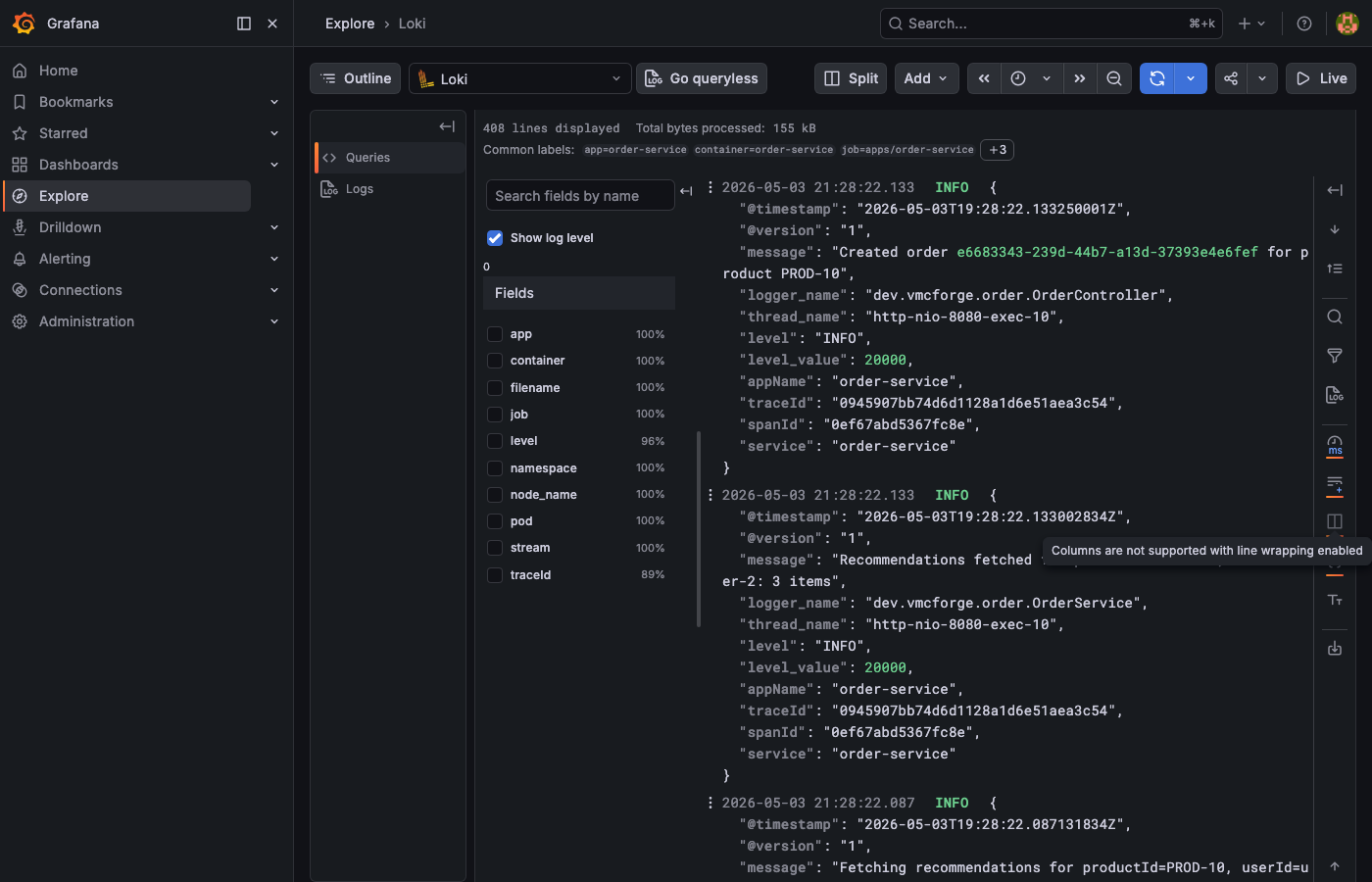

In Explore, select the Loki datasource. In the query field type {namespace="apps", app="order-service"} and run the query. You will see the log stream from the order service.

Expand a log line and locate the traceId field in the structured JSON. Click the Tempo link icon next to it to jump directly to that trace.

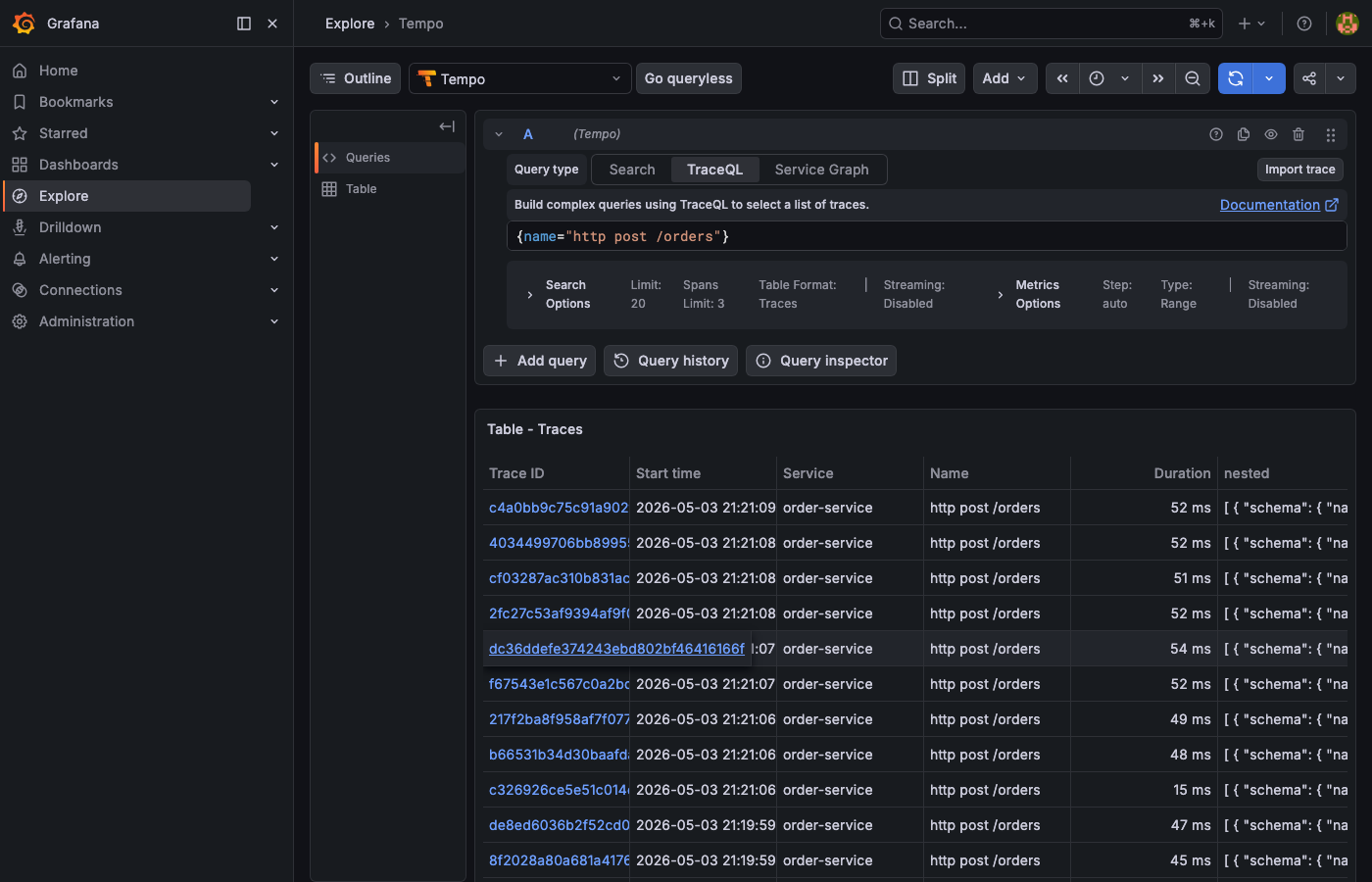

Switch to the Tempo datasource and run the TraceQL query {name="http post /orders"}. The table lists every order trace with its duration, making it easy to spot outliers at a glance.

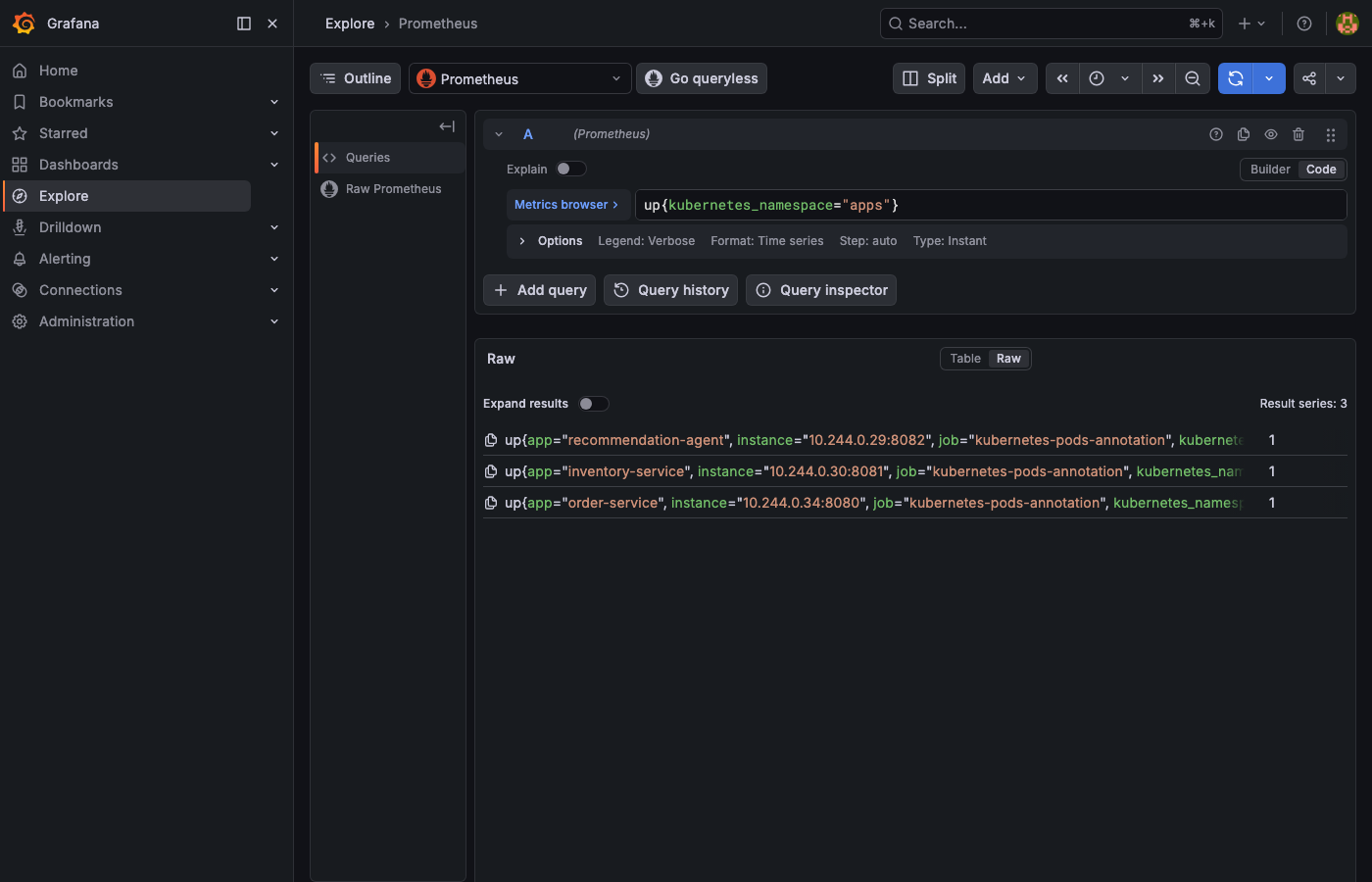

Switch to the Prometheus datasource and run up{kubernetes_namespace="apps"}. You should see Result series: 3, one per service, each returning 1 (UP).

Useful Prometheus queries once metrics are flowing:

# Request rate per service (last 5 minutes)

sum(rate(http_server_requests_seconds_count{job="kubernetes-pods-annotation"}[5m])) by (app)

# P95 latency across all services

histogram_quantile(0.95, sum(rate(http_server_requests_seconds_bucket[5m])) by (le, app))

# Error rate (non-2xx responses)

sum(rate(http_server_requests_seconds_count{status!~"2.."}[5m])) by (app)
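The P95 query relies on histogram_quantile, which estimates a quantile from cumulative bucket counts rather than from raw durations. The sketch below follows the linear interpolation that Prometheus documents for classic histograms, in simplified form (no native histograms, no negative bucket bounds). The bucket values are invented sample data.

```python
import math


def histogram_quantile(q, buckets):
    """Estimate quantile q from (upper_bound, cumulative_count) pairs sorted by bound.

    The last bucket must be the +Inf bucket, as in Prometheus classic histograms.
    """
    if not 0.0 <= q <= 1.0:
        raise ValueError("q must be between 0 and 1")
    if not buckets or not math.isinf(buckets[-1][0]):
        raise ValueError("the last bucket must have an upper bound of +Inf")
    total = buckets[-1][1]
    if total == 0:
        return math.nan  # no observations at all
    rank = q * total
    prev_bound, prev_count = 0.0, 0.0
    for upper, count in buckets:
        if count >= rank:
            if math.isinf(upper):
                return prev_bound  # quantile lies in +Inf bucket: report highest finite bound
            in_bucket = count - prev_count
            if in_bucket == 0:
                return prev_bound
            # Assume observations are spread evenly inside the bucket
            return prev_bound + (upper - prev_bound) * (rank - prev_count) / in_bucket
        prev_bound, prev_count = upper, count
    return prev_bound


# Sample data: 100 requests, cumulative counts per latency bound in seconds
buckets = [(0.1, 50), (0.25, 80), (0.5, 95), (1.0, 100), (math.inf, 100)]
p50 = histogram_quantile(0.50, buckets)
p95 = histogram_quantile(0.95, buckets)
print(p50, p95)
```

The estimate can never be more precise than the bucket boundaries, so a P95 that sits exactly on a bound usually says more about the bucket layout than about the service.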

Useful Loki queries:

# All logs for a specific trace

{namespace="apps"} | json | traceId="<paste-trace-id>"

# Errors across all services

{namespace="apps"} | json | level="error"

# LangChain recommendation calls with output

{app="recommendation-agent"} | json | message="recommendation_response"

Quick reference

Makefile targets

- make all: cluster-start + infra-up + build + deploy
- make infra-up: Helm install of kube-prometheus-stack + loki + tempo + otel-collector
- make build: build all images into Minikube Docker daemon
- make deploy: kubectl apply all service manifests + wait for rollout
- make port-forward: Grafana :3000 · Prometheus :9090 · services :8080–8082
- make seed: 10 POST /orders → full chain, generates traces + logs + metrics
- make k9s: k9s --context observability-demo
- rebuild images + rollout restart (use after code changes)
- kubectl logs --follow for the specified service
- minikube delete -p observability-demo + cleanup

Cluster context: Minikube profile isolation

- minikube start -p observability-demo --cpus 4 --memory 8192
- kubectl --context=observability-demo get pods -A
- make kubeconfig-export → ~/.kube/observability-demo.yaml
- export KUBECONFIG=~/.kube/observability-demo.yaml
- k9s --context observability-demo
- k9s --kubeconfig ~/.kube/observability-demo.yaml

Instrumentation: transport and port by runtime

- Spring Boot: HTTP/protobuf → :4318/v1/traces; metrics at /actuator/prometheus
- FastAPI: gRPC → :4317; metrics at /metrics
- LangChain: gRPC → :4317 + OpenInference; metrics at /metrics
- Log field: traceId (camelCase, all services)
- Prometheus scrape: annotation: prometheus.io/scrape=true

The stack above handles the three-service demo cleanly. The natural extension is distributed tracing across services that run in separate namespaces or separate clusters, where the traceparent header crosses a network boundary managed by a service mesh like Istio or Linkerd. At that point the OTEL Collector becomes a pipeline with multiple exporters, and the Grafana datasources gain a fourth entry: the service mesh telemetry. That is a separate article.